I’m currently the Chief Evangelist @ HumanFirst. I explore & write about all things at the intersection of AI & language; ranging from LLMs, Chatbots, Voicebots, Development Frameworks, Data-Centric latent spaces & more.

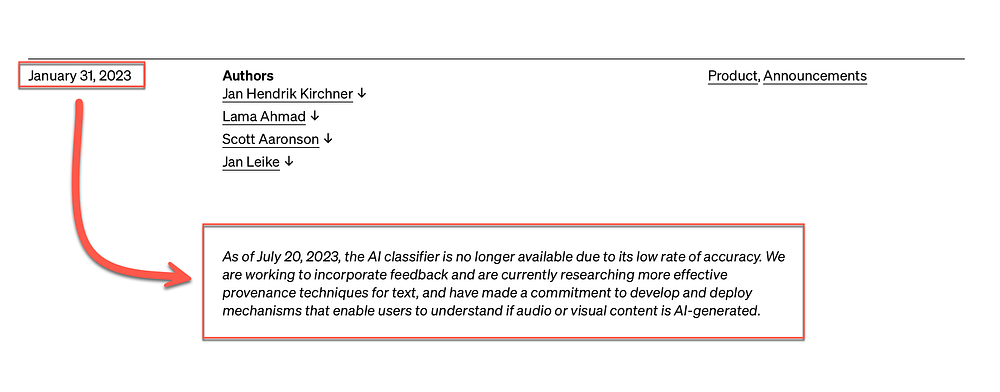

As seen in the extract below, a paragraph was added to the document that announced the classifier, barely five months after the launch. This article considers why this type of classification is hard, and how inaccurate the classifier was in the first place.

LLMs are flexible and highly responsive to requests. An LLM can be asked to respond in such a way that the response seems human-written rather than machine-written.

An LLM might also be asked to write in a way that fools an AI detector into believing the text is human-written, or to sound like a particular personality or type.

Hence a detection system can only base its judgment on word sequences and word choices, which are exactly what an LLM can be instructed to vary.

Any stringent approach is completely unfeasible. One example would be watermarking LLM output by hashing and storing every generated section alongside its generation date and location, then letting institutions query that store with a final document, filtered by geo-code and time window. The advent of open-source LLMs, and the extent to which models can be fine-tuned, makes such a scheme even less workable.
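To make the hash-and-store idea concrete, here is a minimal sketch of what such a registry might look like. Everything in it is hypothetical and for illustration only: the `OutputRegistry` class, the `split_sections` helper, the paragraph-level sectioning and the choice of SHA-256 are assumptions, not a description of any real provider's system.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Record:
    digest: str        # hash of one generated section
    created: datetime  # when the section was generated
    geo_code: str      # where the request came from (hypothetical field)


def split_sections(text):
    # Assumption: a "section" is a paragraph separated by a blank line.
    return [p for p in text.split("\n\n") if p.strip()]


def section_hash(section):
    # Normalise case and whitespace so trivial formatting changes still match.
    normalised = " ".join(section.lower().split())
    return hashlib.sha256(normalised.encode("utf-8")).hexdigest()


class OutputRegistry:
    """Hypothetical store of hashes for every section an LLM has produced."""

    def __init__(self):
        self._records = []

    def store(self, text, created, geo_code):
        for section in split_sections(text):
            self._records.append(Record(section_hash(section), created, geo_code))

    def query(self, document, geo_code, start, end):
        """Return the fraction of the document's sections found in the registry."""
        sections = split_sections(document)
        if not sections:
            return 0.0
        known = {
            r.digest
            for r in self._records
            if r.geo_code == geo_code and start <= r.created <= end
        }
        hits = sum(1 for s in sections if section_hash(s) in known)
        return hits / len(sections)


registry = OutputRegistry()
generated = "LLMs are flexible.\n\nThey respond to requests."
when = datetime(2023, 7, 1, 12, 0)
registry.store(generated, when, "ZA")

window = (when - timedelta(days=1), when + timedelta(days=1))
exact = registry.query(generated, "ZA", *window)
edited = registry.query(generated.replace("flexible", "adaptable"), "ZA", *window)
print(exact, edited)
```

Even in this toy form the weakness shows: an exact copy matches fully, but changing a single word in a paragraph changes its hash, so that section no longer matches at all. Text produced by an open-source or fine-tuned model would never be in the registry to begin with.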