Introduction

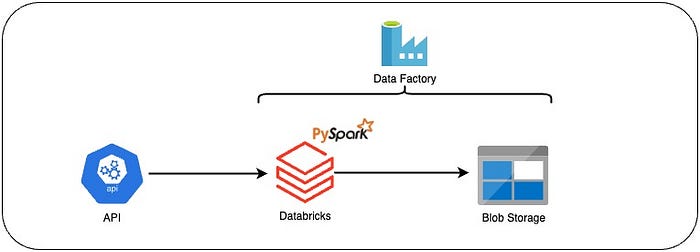

In today’s era of data-driven decision-making, a well-architected data pipeline is pivotal for any business. It lets a business process large volumes of data and deliver actionable insights in a timely manner. In this article, we’ll guide you through building a complete end-to-end data pipeline using Databricks, Azure Blob Storage, and Azure Data Factory, built around the same API used in the previous article, linked below.

Building an End-to-End Data Pipeline — Part 1 with Airflow and Python

Prerequisites

To follow this tutorial, you will need:

- An Azure account.

- Basic knowledge of PySpark.

- Access to Databricks.