This story explores automatic differentiation, a feature of modern deep learning frameworks that automatically calculates parameter gradients during the training loop. The story introduces this technology alongside practical examples in Python and C++.

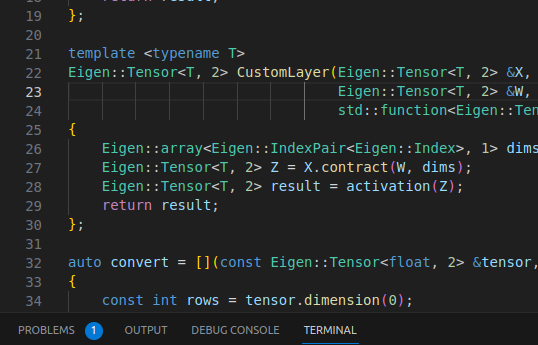

Figure 1: Coding Autodiff in C++ with Eigen

Roadmap

- Automatic Differentiation: what it is, the motivation, etc.

- Automatic Differentiation in Python with TensorFlow

- Automatic Differentiation in C++ with Eigen

- Conclusion

Automatic Differentiation

Modern frameworks such as PyTorch and TensorFlow provide a feature called automatic differentiation [1], or autodiff for short. As its name suggests, autodiff automatically calculates the derivatives of functions, so developers do not have to implement those derivatives themselves.
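To make the idea concrete before turning to the frameworks, here is a minimal sketch of autodiff in plain Python. It uses forward-mode differentiation with dual numbers, which is only one way to do it and is not meant to represent how PyTorch or TensorFlow work internally. The names `Dual`, `_lift`, `derivative`, and `f` are hypothetical and chosen only for this illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Dual:
    """A number that carries its value and its derivative together."""
    value: float
    deriv: float = 0.0

    def __add__(self, other):
        other = _lift(other)
        # Sum rule: (u + v)' = u' + v'
        return Dual(self.value + other.value, self.deriv + other.deriv)

    __radd__ = __add__

    def __mul__(self, other):
        other = _lift(other)
        # Product rule: (u * v)' = u' * v + u * v'
        return Dual(self.value * other.value,
                    self.deriv * other.value + self.value * other.deriv)

    __rmul__ = __mul__


def _lift(x):
    """Treat plain numbers as constants, whose derivative is zero."""
    return x if isinstance(x, Dual) else Dual(float(x), 0.0)


def derivative(func, x):
    """Evaluate d(func)/dx at x by seeding the input derivative with 1."""
    return func(Dual(x, 1.0)).deriv


def f(x):
    # An ordinary function; no derivative is written by hand.
    return 3 * x * x + 2 * x + 1


print(derivative(f, 2.0))  # 14.0, since f'(x) = 6x + 2
```

Note that `f` is written as ordinary arithmetic. The derivative falls out of the overloaded operators, which is the essence of what autodiff saves the developer from doing manually.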