If you are just looking for a short tutorial that explains how to build a simple LLM application, you can skip to section “6. Creating a Vector store”, there you have all the code snippets you need to build up a minimalistic LLM app with vector store, prompt template and LLM call.

Intro

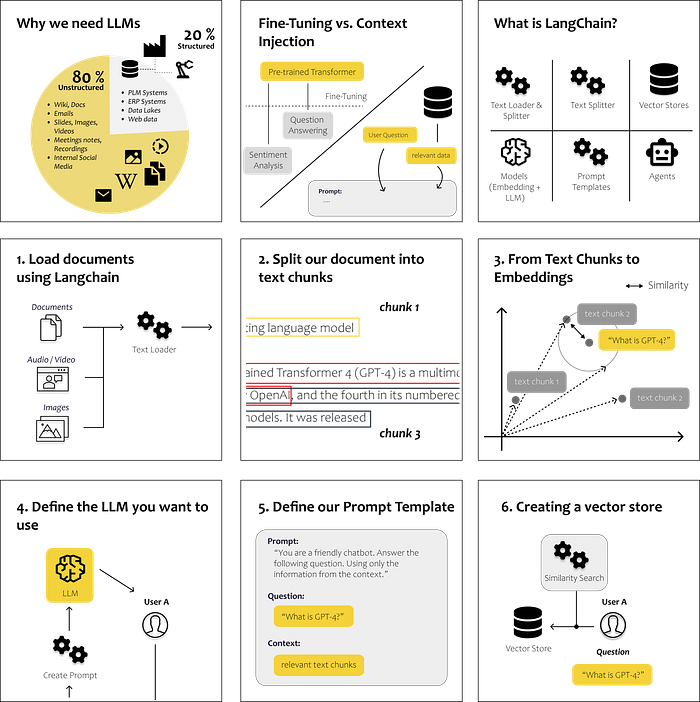

Why we need LLMs

Fine-Tuning vs. Context Injection

What is LangChain?

Step-by-Step Tutorial

1. Load documents using LangChain

2. Split our Documents into Text Chunks

3. From Text Chunks to Embeddings

4. Define the LLM you want to use

5. Define our Prompt Template

6. Creating a Vector Store

Table of contents

Why we need LLMs

The evolution of language has brought us humans incredibly far to this day. It enables us to efficiently share knowledge and collaborate in the form we know today. Consequently, most of our collective knowledge continues to be preserved and communicated through unorganized written texts.

Initiatives undertaken over the past two decades to digitize information and processes have often focused on accumulating more and more data in relational databases. This approach enables traditional analytical machine learning algorithms to process and understand our data.