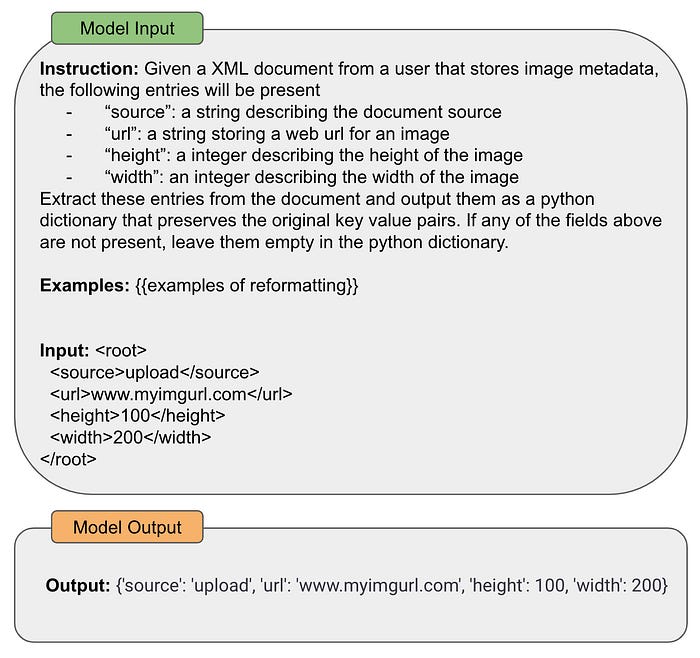

The popularization of large language models (LLMs) has changed how we solve problems with computers. In prior years, solving a task such as reformatting a document or classifying a sentence required writing a program (i.e., a set of commands precisely written in some programming language). With LLMs, solving such problems often requires no more than a textual prompt. For example, we can ask an LLM to reformat a document using a prompt similar to the one shown below.

Using prompting to reformat an XML document (created by author)

As demonstrated in the example above, the generic text-to-text format of LLMs makes it easy to solve a wide variety of problems. We first saw a glimpse of this potential with the proposal of GPT-3 [18], which showed that sufficiently large language models can use few-shot learning to solve many tasks with surprising accuracy. However, as research on LLMs progressed, we began to move beyond basic (but still very effective!) prompting techniques such as zero-shot and few-shot learning.
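To make the distinction between zero-shot and few-shot prompting concrete, here is a minimal sketch of how such prompts might be assembled as plain text. The `build_prompt` helper, the `Input:`/`Output:` layout, and the sentiment task are assumptions chosen for illustration; they are not taken from GPT-3 [18] or from any particular library, and no model is called.

```python
from typing import Sequence, Tuple


def build_prompt(
    instruction: str,
    query: str,
    examples: Sequence[Tuple[str, str]] = (),
) -> str:
    """Assemble a text prompt (hypothetical helper, for illustration only).

    With no examples the result is a zero-shot prompt; with one or more
    (input, output) pairs it becomes a few-shot prompt.
    """
    parts = [instruction.strip(), ""]
    # Each demonstration shows the model the expected input/output format.
    for example_input, example_output in examples:
        parts.append(f"Input: {example_input}")
        parts.append(f"Output: {example_output}")
        parts.append("")
    # The final query is left open so the model completes the output.
    parts.append(f"Input: {query}")
    parts.append("Output:")
    return "\n".join(parts)


instruction = "Classify the sentiment of each sentence as positive or negative."
query = "The movie was a delight."

# Zero-shot: only the instruction and the query.
zero_shot = build_prompt(instruction, query)

# Few-shot: the same instruction, plus a handful of solved examples.
few_shot = build_prompt(
    instruction,
    query,
    examples=[
        ("I loved this book.", "positive"),
        ("The service was terrible.", "negative"),
    ],
)

print(few_shot)
```

Either string would then be sent to an LLM as-is; the only difference between the two settings is whether solved examples appear in the prompt.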