In the ever-evolving world of machine learning, choosing the right tool can feel like finding a needle in a haystack. Today, we’re diving deep into two popular approaches to adapting large language models like GPT-4: RAG (Retrieval-Augmented Generation) and fine-tuning. Grab a cup of coffee, and let’s explore them together!

Introduction

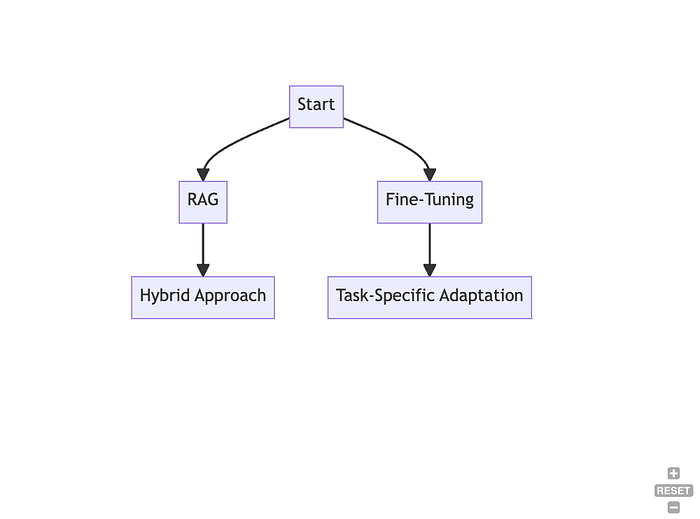

Before we dive in, let’s set the stage with a brief overview of what RAG and fine-tuning entail. Picture this: You’re standing at a crossroads. One path leads to the world of RAG, a hybrid approach that pairs a retriever system with a generative model. The other leads to the realm of fine-tuning, a conceptually simpler yet highly effective method that tailors a pre-trained model to a specific task by training it further on task-specific data. Which path do you take? Let’s find out!

Retrieval-Augmented Generation (RAG)

A Closer Look

Imagine having a wise old sage at your disposal, pulling in knowledge from a vast library to craft well-informed responses. That’s RAG for you! Before the model answers, a retriever fetches relevant passages from an external source, and the generative model uses them as context for its response.
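To make the "sage in a library" picture concrete, here is a minimal sketch of the retrieve-then-generate flow in plain Python. Everything in it is an assumption for illustration: the `LIBRARY` passages, the helper names (`tokenize`, `cosine`, `retrieve`, `build_prompt`), and the word-count similarity, which stands in for the learned embeddings and vector store a real system would more likely use. The final generation step is left out; the prompt is what you would hand to a model such as GPT-4.

```python
import math
import re
from collections import Counter

# Hypothetical mini "library" standing in for a real document store.
LIBRARY = [
    "RAG retrieves passages from a document store before generating an answer.",
    "Fine-tuning updates the weights of a pre-trained model on task-specific data.",
    "Espresso is brewed by forcing hot water through finely ground coffee.",
]


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two word-count vectors (Counters)."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query, documents, k=2):
    """Return the k documents most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(
        documents,
        key=lambda d: cosine(q, Counter(tokenize(d))),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query, passages):
    """Place the retrieved passages ahead of the question as context."""
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}"
    )


query = "How does RAG generate an answer?"
passages = retrieve(query, LIBRARY, k=1)
prompt = build_prompt(query, passages)
print(prompt)  # this prompt would then be sent to the generative model
```

The point of the sketch is the shape of the pipeline rather than the scoring method: the model’s weights never change, and the knowledge arrives through the prompt at question time.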