Llama 2, the latest family of large language models (LLMs) from Meta AI, is designed to perform well across a wide range of natural language tasks. It comprises a suite of pre-trained and fine-tuned models ranging from 7 billion to 70 billion parameters.

What sets Llama 2 apart is its array of advantages over existing LLMs:

- Broad and Diverse Knowledge Base: Llama 2 was pre-trained on 2 trillion tokens of text drawn from diverse domains and languages, which gives it a broad base of general knowledge.

- Extended Context Length: Llama 2 doubles the context length of its predecessor, Llama 1, from 2,048 to 4,096 tokens. This lets the model handle longer texts while generating more coherent and contextually accurate responses.

- Superior Performance: Llama 2 outperforms other open-source LLMs on many external benchmarks, including reasoning, coding, proficiency, and knowledge tests.

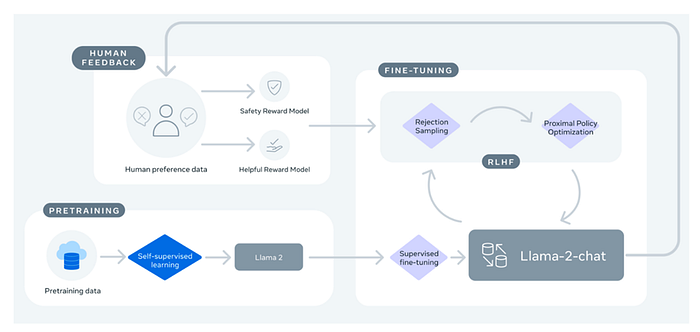

- Tailored Expertise: Llama 2 is also fine-tuned for specific applications such as dialogue, using reinforcement learning from human feedback (RLHF). This fine-tuning is aimed at making the model's responses safer and more helpful for users.

And the best part? Llama 2 is freely available for both research and commercial purposes, making it a powerful resource for individuals, creators, researchers, and businesses. Meta AI’s commitment is to foster responsible experimentation, innovation, and scalability of ideas through LLMs.

In this article, we’ll walk you through the process of implementing this technique in a Google Colab notebook to craft your very own Llama 2 model. The link to the Colab notebook is available at the end.